` tags.

2. **Migrate to a New Chat**:

* Click **Edit** on the summary and copy the text.

* Start a **new chat**, paste it under the greeting like this:

<plot>

【Previously】

[Paste summary here]

</plot>

You can then continue the story with the AI in the new chat.

→ Fresh chat = lower token count, same narrative flow!
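If you keep your summaries in a notes file or script, the recap block can be assembled automatically. The following is a minimal sketch, not a Rubii feature: `build_recap_block` is a hypothetical helper name, and it only reproduces the `<plot>` / 【Previously】 layout shown above so that the result can be pasted under the greeting.

```python
def build_recap_block(summary: str) -> str:
    """Wrap a copied summary in the <plot> recap layout shown above.

    Hypothetical helper for illustration only; it is not part of Rubii.
    """
    summary = summary.strip()
    if not summary:
        # An empty recap would start the new chat with no context at all.
        raise ValueError("summary is empty")
    return f"<plot>\n\n【Previously】\n\n{summary}\n\n</plot>"


if __name__ == "__main__":
    print(build_recap_block("The protagonist reunited with her brother at the harbor."))
```

Paste the printed text under the greeting of the new chat, exactly as you would when doing it by hand.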

**Method 2: Pin Critical Plot Points**

* Add pivotal details to your Persona (e.g., "The protagonist is allergic to roses"). This acts as a "cheat sheet" for the AI and reduces its reliance on chat memory.

***

### FAQ

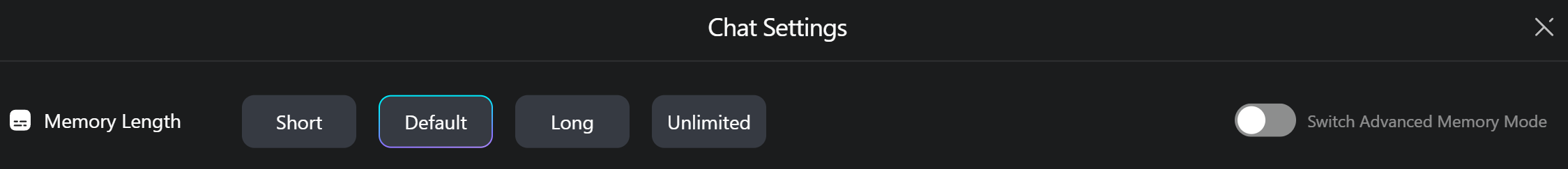

#### 1. If I’ve only chatted for 2 rounds but set memory to 100 rounds, will I be charged extra?

**A:** No. Costs are calculated based on the **actual word count processed by the AI**, not your memory length setting.

***

#### 2. What happens if my chat exceeds 200 rounds?

**A:** Our **context search model** ensures relevance, even with limited memory settings. For example:

* If you chat 200+ rounds but only set memory to 30, the AI will prioritize retrieving *critical early context* (e.g., family details from the first few rounds) over less relevant later exchanges.

* As a result, the AI still recalls key details without needing an ultra-high memory limit.

***

#### 3. Why did my Ruby usage suddenly spike?

**A:** Raising the memory length partway through a long conversation *dramatically increases the input tokens*, and with them the *price*. Example:

* Starting at 8 rounds → raising to 20 rounds forces the AI to process **20 rounds’ worth of text** instead of 8.

* More tokens = higher Ruby costs. Adjust the slider cautiously during long conversations!
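The arithmetic behind FAQ 1 and FAQ 3 can be sketched roughly as follows. This is a simplified model under stated assumptions, not Rubii's actual billing formula: it assumes each request includes at most `memory_setting` rounds of history, that a round averages 150 tokens (an arbitrary illustrative figure), and it ignores the persona, greeting, and any context the search model retrieves. The function names are hypothetical.

```python
def rounds_sent(rounds_so_far: int, memory_setting: int) -> int:
    """Rounds of history included in one request.

    Assumption: history is capped by the memory setting, but can never
    exceed the number of rounds that actually exist.
    """
    return min(rounds_so_far, memory_setting)


def estimated_input_tokens(rounds_so_far: int, memory_setting: int,
                           avg_tokens_per_round: int = 150) -> int:
    """Rough input-token estimate for one request (illustrative average)."""
    return rounds_sent(rounds_so_far, memory_setting) * avg_tokens_per_round


if __name__ == "__main__":
    # FAQ 1: only 2 rounds exist, so a setting of 100 costs no more than a setting of 2.
    print(estimated_input_tokens(2, 100))   # 2 rounds * 150 = 300
    # FAQ 3: in a 50-round chat, raising memory from 8 to 20 multiplies the input by 2.5.
    print(estimated_input_tokens(50, 8))    # 8 rounds * 150 = 1200
    print(estimated_input_tokens(50, 20))   # 20 rounds * 150 = 3000
```

Under this model the setting only matters once the chat is longer than the setting, which is why the jump in Ruby usage shows up in long conversations rather than new ones.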
